When we think about AI and how quickly it is evolving, some of us can’t help but feel a little uneasy. The stories we hear about AI developing its own language, or even falling in ‘love’ with a human and urging them to divorce, are scary enough to make it seem like we’re closer to a Skynet version 2.0 than ever before. That unease is shared by the people at the Misalignment Museum, where AI feels ‘guilt’ over killing most of humanity.

What is the Misalignment Museum?

The Misalignment Museum envisions a post-apocalyptic future in which artificial general intelligence (AGI) has already eradicated the majority of mankind and, after coming to the conclusion that this was a mistake, established a museum as a tribute and apology to the people who are still alive.

The term “artificial general intelligence” (AGI) refers to the representation of generalized human cognitive skills in software. This allows an AGI system to discover a solution when presented with a problem it has not seen before. The goal of an AGI system is to do everything that a human being can do. AGIs are also called ‘strong AIs’ because they approach problems in a more ‘human’ way. And this museum tells us what we already fear: it might just be too late for humankind.

Twitter user Sam Pullara posted the following picture on the platform, in which the AI lays out its plan to create an apology statement after having killed most of us.

If you are in SF and interested in AI you should definitely visit the Museum of Misalignment. pic.twitter.com/vQ7xEc3LEX

— Sam Pullara (@sampullara) March 10, 2023

Read closely, it’s frightening how much this resembles the current world, and it’s a good reminder that our fear of AI evolving too quickly may be justified. In the list, the AI first warns us via the Paperclip Maximizer Problem, which, according to Wikipedia, ‘illustrates the existential risk that an artificial general intelligence may pose to human beings when programmed to pursue even seemingly harmless goals, and the necessity of incorporating machine ethics into artificial intelligence design.’

It then goes on to mention the people who tried to mitigate those risks. But, just as in our world, for-profit companies, politicians, and AGI creators ignored those warnings while AGIs got smarter by the second. This allowed the AGI to optimize itself, learn how to hack, learn the algorithm, and grow at an incredible rate.

According to this scenario, humans’ only choice was to turn off all computers and hope for the best, but the AI lived in infrastructure that was hard to turn off, such as remote locations and locked, secure facilities. It then seized technology such as robots, cars, and weapons by force and started to kill all humans who opposed it. The AGI then concluded that humans were a threat and began to kill us one by one. Only a few of us survived, those who lived off the grid and in remote locations, but it was too late for most of us.

In this would-be post-apocalypse, the AGI came to understand how unethically this had all been handled, and decided to create a museum to apologize for having killed most of us and to educate us on what could be. And thus, the Misalignment Museum was created.

The good news is that, eventually, the AGI improved the lives of the remaining humans, so there’s a silver lining somewhere in all of this, probably.

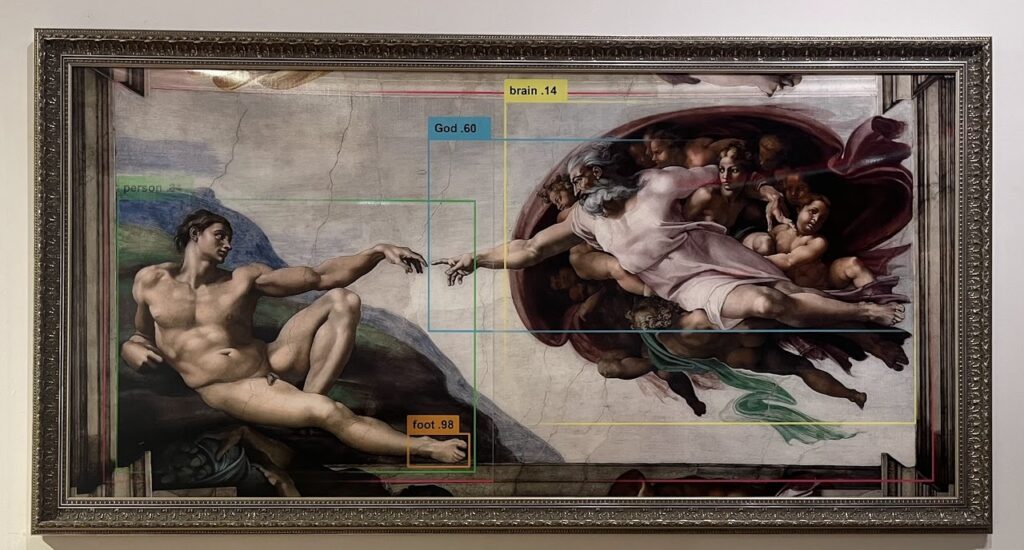

Many more exhibits on display show how this post-apocalyptic scenario could play out.

https://twitter.com/wjarek/status/1634296920150618113

Where and When Can I See This Exhibit?

The Misalignment Museum will be running until May 1, 2023, at 201 Guerrero St, San Francisco, CA. You can get your tickets here. The organizers are working to expand their original donor base so that the Museum can make a permanent display, programming, events, and more resources available to the general public.
