The Doomsday Clock was first introduced in 1947, at the beginning of the Cold War. The clock’s hands are set to a certain time, with midnight representing the point of global catastrophe, or “doomsday.” The closer the clock is to midnight, the closer the world is considered to be to a potential global disaster. In 2019, the members of the Science and Security Board set the Doomsday Clock at two minutes to midnight. In 2022, it was set to 100 seconds to midnight. Last year, the Clock was set to 90 seconds to midnight, mainly due to the threats from Russia to deploy nuclear weapons in the conflict in Ukraine.

The new announcement made today confirmed that the 2024 Doomsday Clock remains set at the same time: 90 seconds to midnight. The Bulletin of the Atomic Scientists comments that this time was chosen because “humanity continues to face an unprecedented level of danger.”

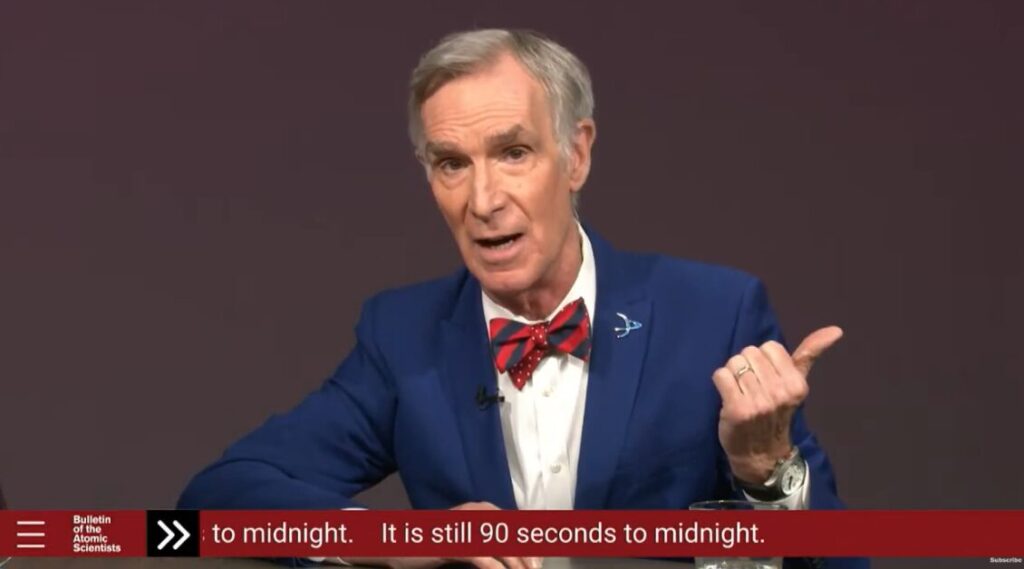

The Bulletin of the Atomic Scientists hosted a live virtual news conference featuring special guest Bill Nye at 10:00 a.m. EST/1500 GMT on Tuesday, January 23rd, 2024, to announce whether the time on the iconic “Doomsday Clock” would change.

Let’s take a look at the announcement.

2024 Doomsday Clock Statement

Nuclear Risk

The past year was marked by tense interactions among major global powers as they actively pursued nuclear modernization initiatives while the framework for nuclear arms control deteriorated. Against this backdrop, the Bulletin of the Atomic Scientists comments that it is challenging to identify a clear path toward a peaceful and sustainable resolution to Russia’s conflict with Ukraine. Worries persist about the potential use of nuclear weapons by Russia in this ongoing conflict.

In March 2023, President Putin announced plans to deploy tactical nuclear weapons in Belarus, though it remains unclear whether any weapons have actually been relocated. Subsequently, in October 2023, Russia’s Duma voted to withdraw Moscow’s ratification of the Comprehensive Nuclear Test Ban Treaty. Like the United States, Russia remains a signatory to the treaty.

Additional nuclear crises persist. North Korea’s nuclear weapons program is steadily progressing, and in April 2023, the country claimed a successful test of a solid-fuel intercontinental ballistic missile named Hwasong-18. Solid-fuel missiles are more mobile and can be launched more quickly, which makes them more survivable. In response, South Korea has sought an expanded role in the United States’ nuclear commitments to defend the South, a move that might not necessarily diminish South Korea’s inclination to pursue its own deterrent capabilities.

India and Pakistan continue to amass weapons and delivery systems, and there have been no positive developments concerning the nuclear forces, postures, or fissile material production of either nation.

Read more about the nuclear risks here.

Climate Change

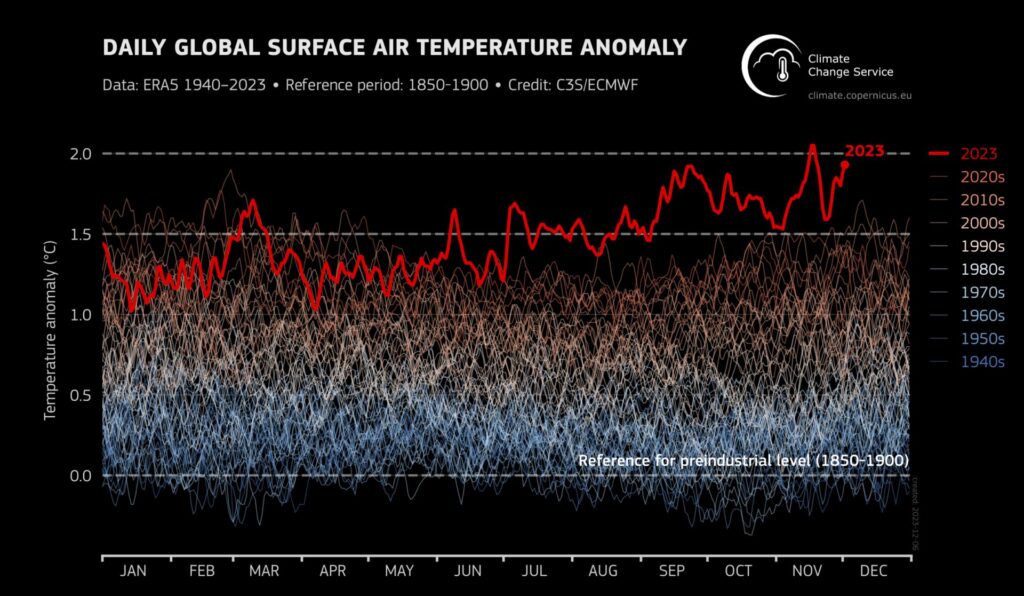

The diverse climate impacts observed globally in 2023, such as extensive wildfires, widespread flooding, and prolonged heat waves, along with the ongoing increase in greenhouse gas emissions, raise significant concerns. However, there is also noteworthy progress in the clean-energy transition, evident in increased deployment, investment, and the implementation of policies aimed at reducing carbon dioxide emissions.

Record-breaking sea-surface temperatures were observed both globally and in the North Atlantic. Additionally, Antarctic sea ice recorded its lowest daily relative extent since the start of satellite data, measuring approximately 2.67 million square kilometers below the 1991–2023 average—an area roughly equivalent to the size of Kazakhstan.

In 2022, global greenhouse gas emissions rose by 1.2 percent compared to 2021, reaching a record 57.4 gigatons of carbon dioxide equivalent. The window for strengthening future commitments and implementing existing agreements to limit global warming to the 1.5-degree Celsius target is closing rapidly.

While there are encouraging signs in the expansion of renewable energy, it is imperative for the global economy to attain net zero carbon dioxide emissions to effectively curb further warming. The sooner this objective is accomplished, the less human suffering will result from climate disruption.

However, the ongoing increase in carbon dioxide emissions underscores a concerning reality: the world has not yet embarked on a trajectory that ensures a transition to net-zero emissions.

Read more about the climate change risks here.

Biological Threats

The capabilities of AI are growing exponentially, raising concerns and controversies regarding the potential for generative AI to offer information enabling states, subnational groups, and non-state actors to develop more harmful and transmissible biological agents. Presently, available evidence indicates that, with generative AI, the acquisition of known harmful agents is more probable than the creation of entirely new ones. Nevertheless, it is evident that generative AI can be utilized as a tool to enhance existing pathogens. It would be unwise to dismiss the possibility of AI-assisted design contributing to the development of novel biological agents and weapons in the future.

US President Joe Biden signed an executive order on “safe, secure, and trustworthy AI” in October 2023, which aims to protect “against the risks of using AI to engineer dangerous biological materials by developing strong new standards for biological synthesis screening.” When it comes to the risks of AI misuse in the life sciences, full transparency might not be advisable. One cause for concern is the revelation that the public release of comprehensive information about large language models allowed hackers to circumvent safeguards effortlessly, obtaining “nearly all key information needed” to recreate the 1918 pandemic influenza virus.

Terrorist organizations persist in their pursuit of biological agents and weapons, raising concerns globally about the potential deployment of biological agents by such groups, particularly in the Middle East and other regions. The utilization of a biological agent would likely trigger robust international intervention and, if accurately attributed, result in widespread condemnation and coordinated actions against the country or group responsible for initiating the attack.

Read more about the biological threats here.

AI and Other Disruptive Technologies

The standout advancement in the disruptive technology realm last year was the remarkable progress in generative artificial intelligence. The sophistication of text generators, particularly those based on large language models like GPT-4, prompted certain esteemed experts to voice apprehensions about potential existential risks associated with rapid advancements in the field.

Linking the metaphorical nuclear launch button to ChatGPT could present an existential threat to humanity, but that threat would stem from the potential use of nuclear weapons rather than from any inherent risk in AI itself. More generally, poor decisions to grant AI control over critical physical systems could have catastrophic results. The responsible and ethical deployment of AI systems is crucial to avoiding such unintended consequences.

The military application of AI is rapidly advancing, with widespread usage observed in intelligence, surveillance, reconnaissance, simulation, and training. Generative AI is also expected to play a role in information operations. A significant area of concern is the development of lethal autonomous weapons capable of identifying and engaging targets without human intervention. The United States is notably expanding its use of AI on the battlefield, with plans to deploy thousands of autonomous (non-nuclear) weapon systems within the next two years.

Fortunately, numerous countries are acknowledging the significance of regulating AI and are initiating measures to mitigate its potential for causing harm.

Read more about AI and other disruptive technologies here.

About the Bulletin of the Atomic Scientists

The Bulletin of the Atomic Scientists functions as a media organization, maintaining a free-access website and a bimonthly magazine. The organization’s iconic Doomsday Clock and regular events serve to provide the public, policymakers, and scientists with the necessary information to reduce man-made threats to human existence. The Bulletin concentrates on three main areas: nuclear risk, climate change, and disruptive technologies, including advancements in biotechnology. What unites these subjects is a fundamental belief that, as humans created them, they can be controlled.
